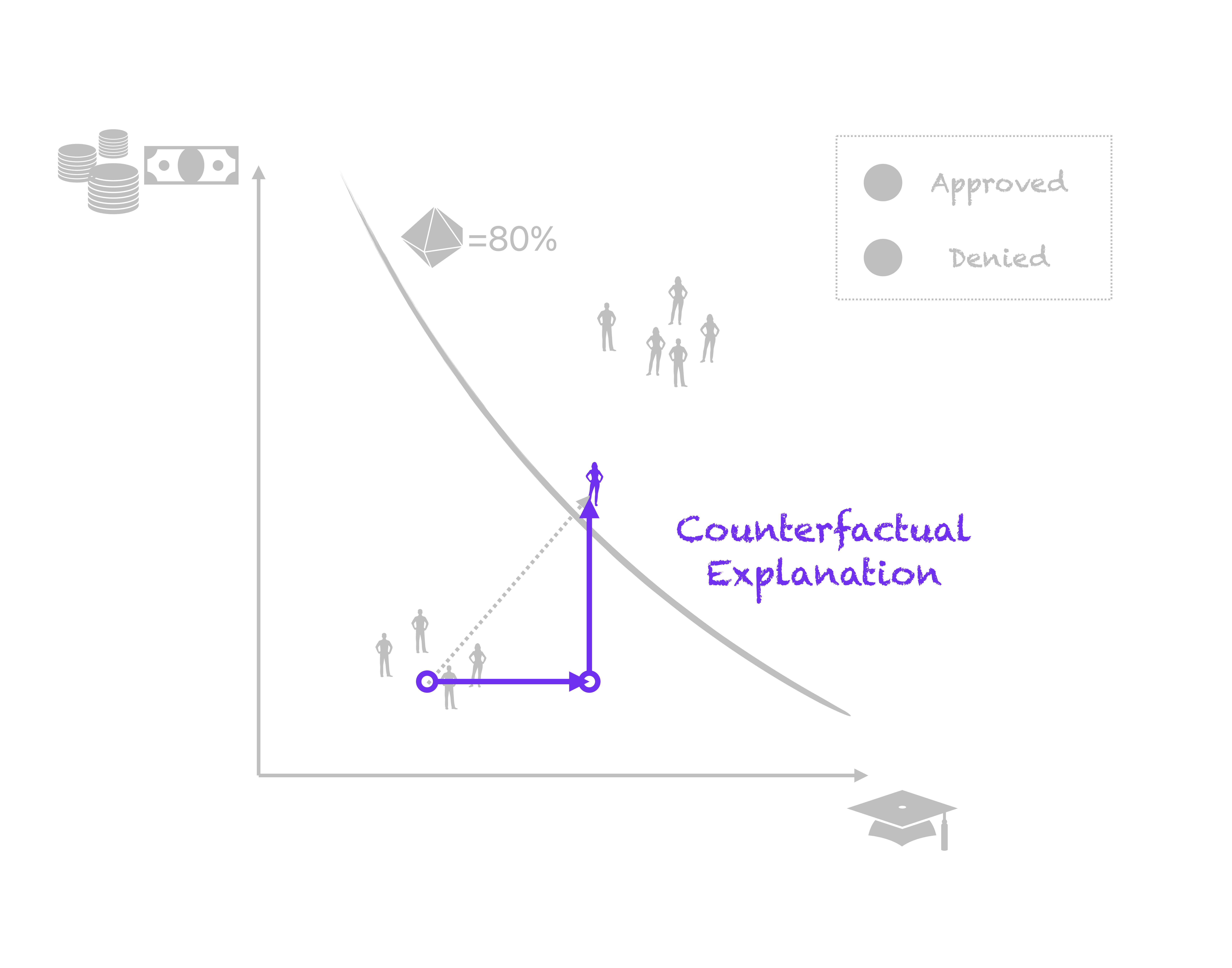

Counterfactual Explanations and Algorithmic Recourse for Trustworthy AI

Research Seminar

Patrick Altmeyer

Delft University of Technology

March 11, 2026

The Ground Truth (Reality)

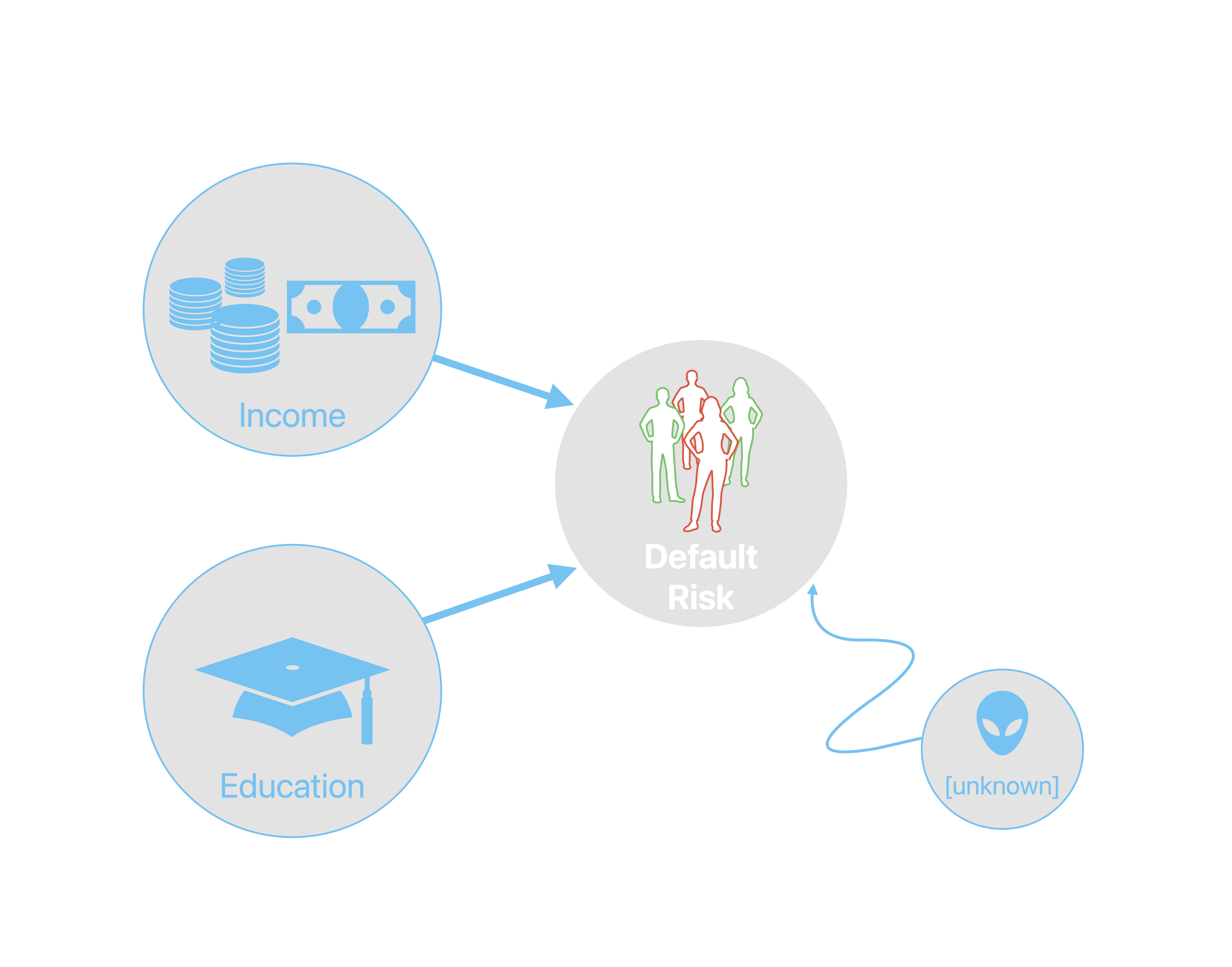

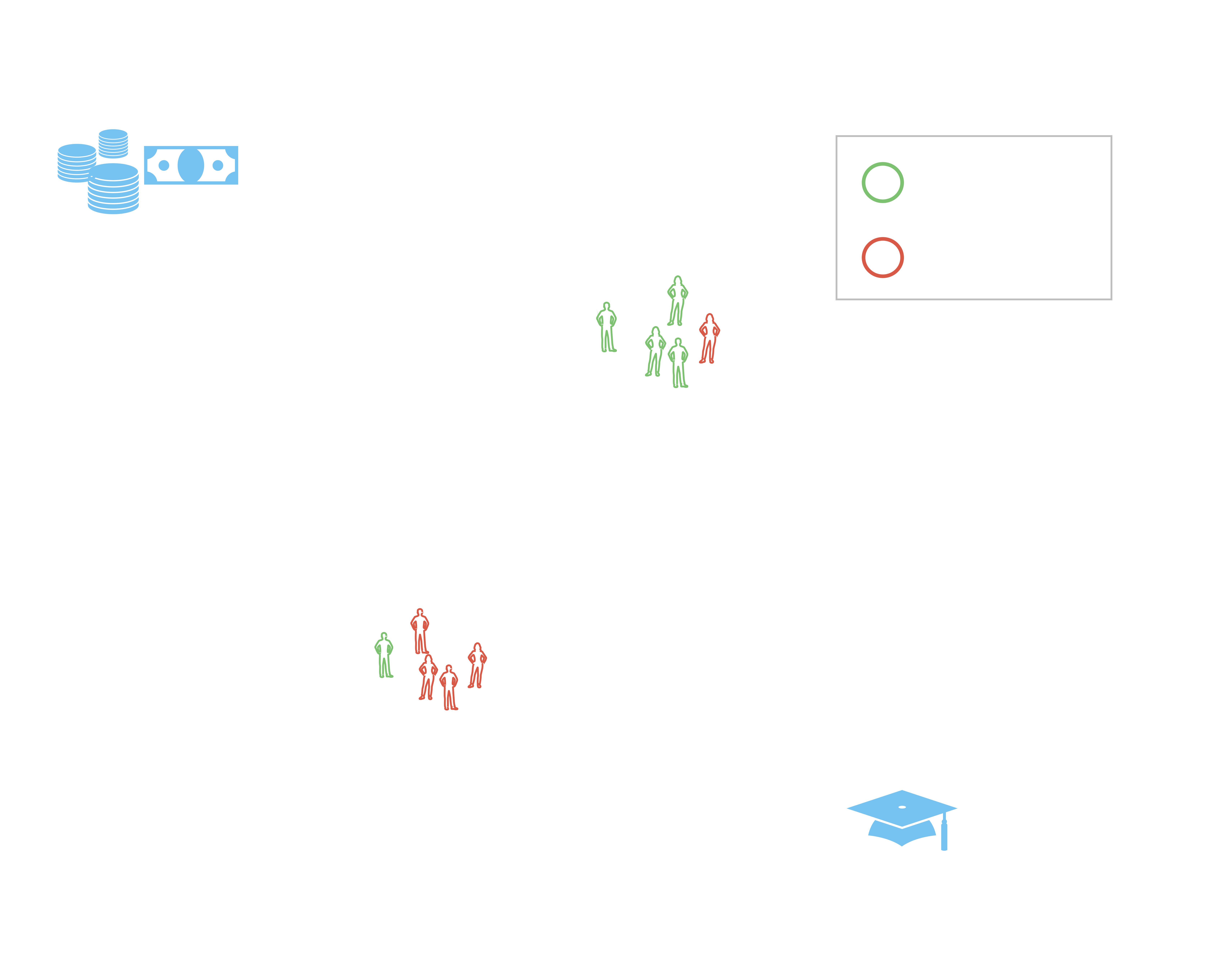

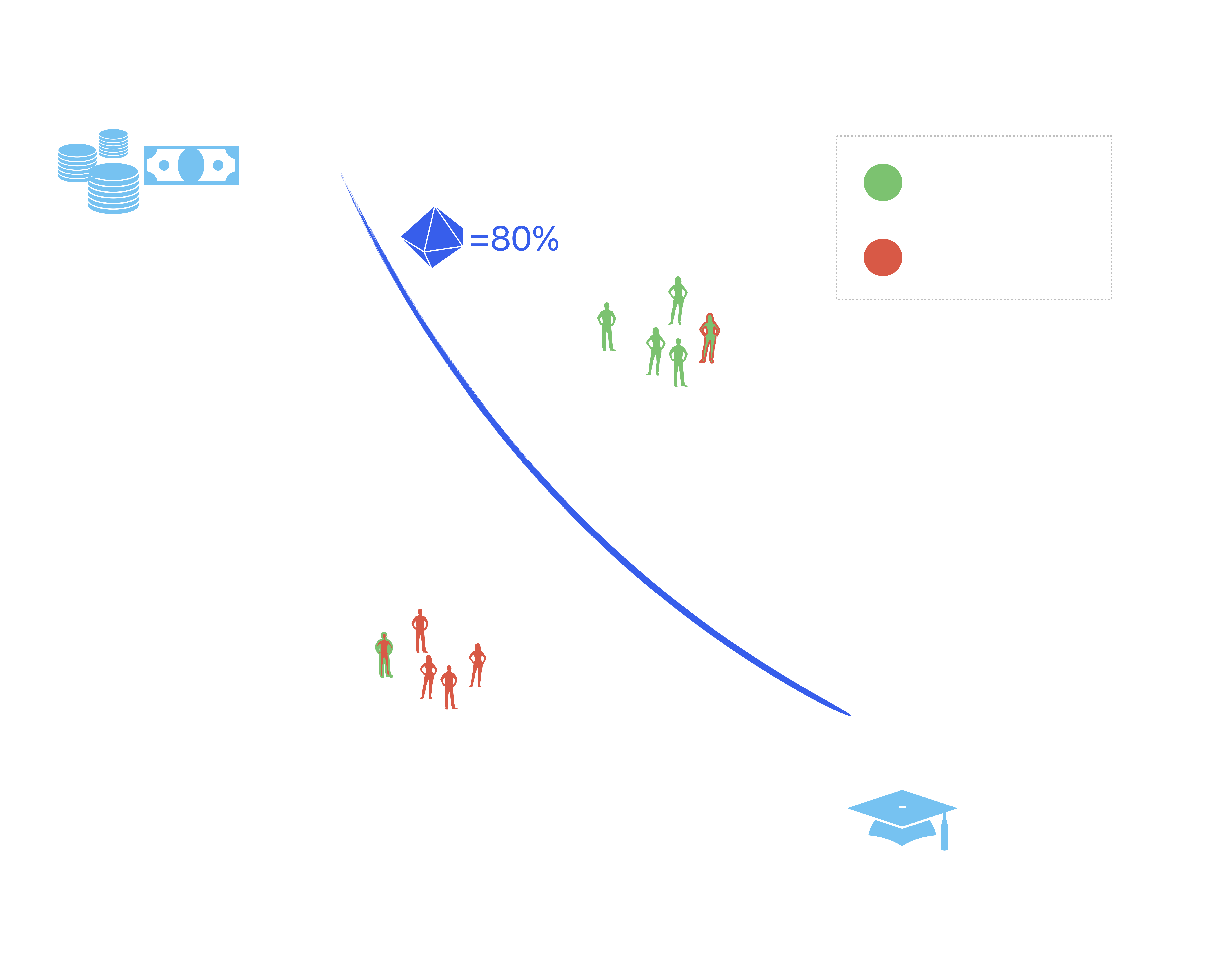

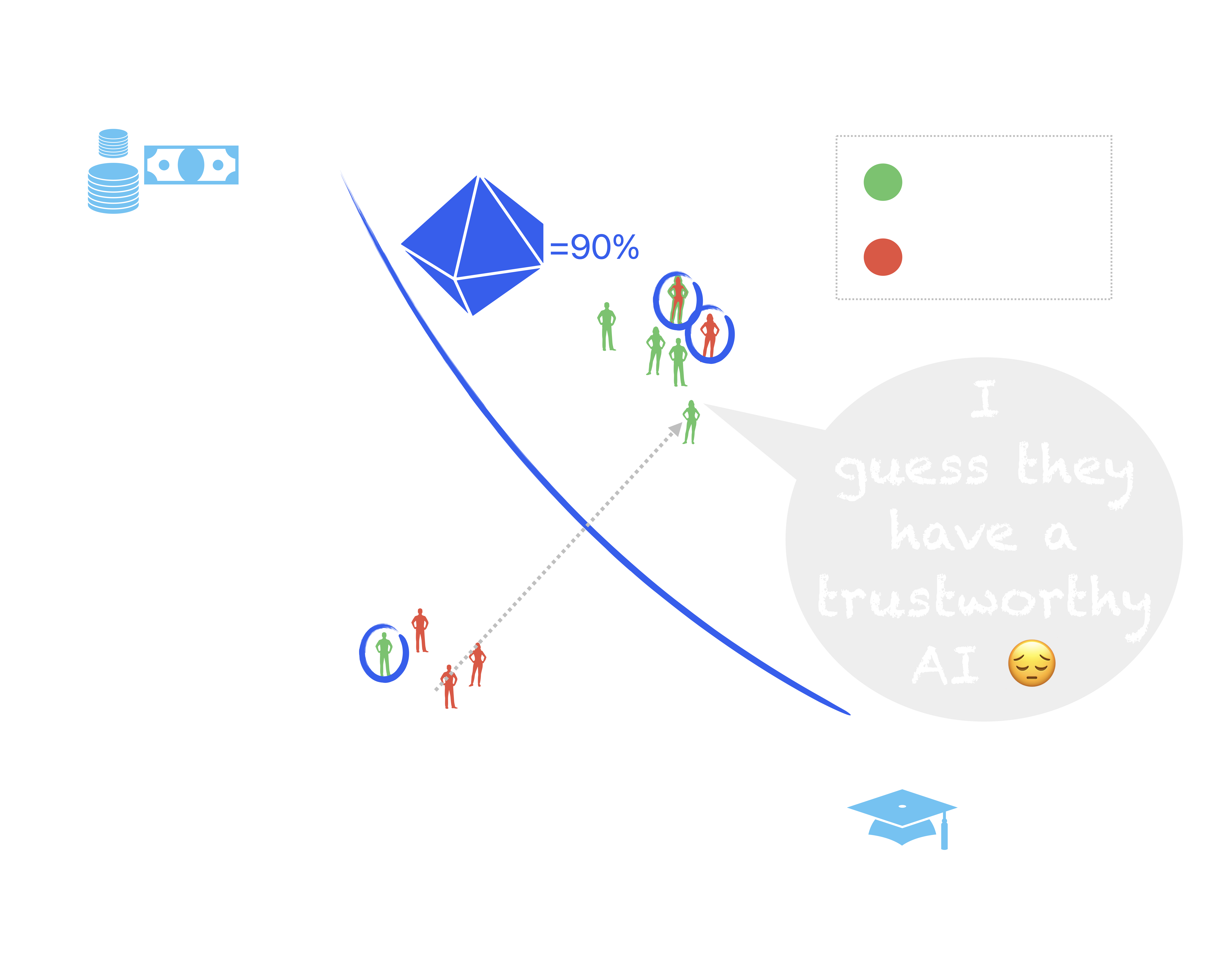

Black-Box AI

Big, Beautiful Black-Box AI

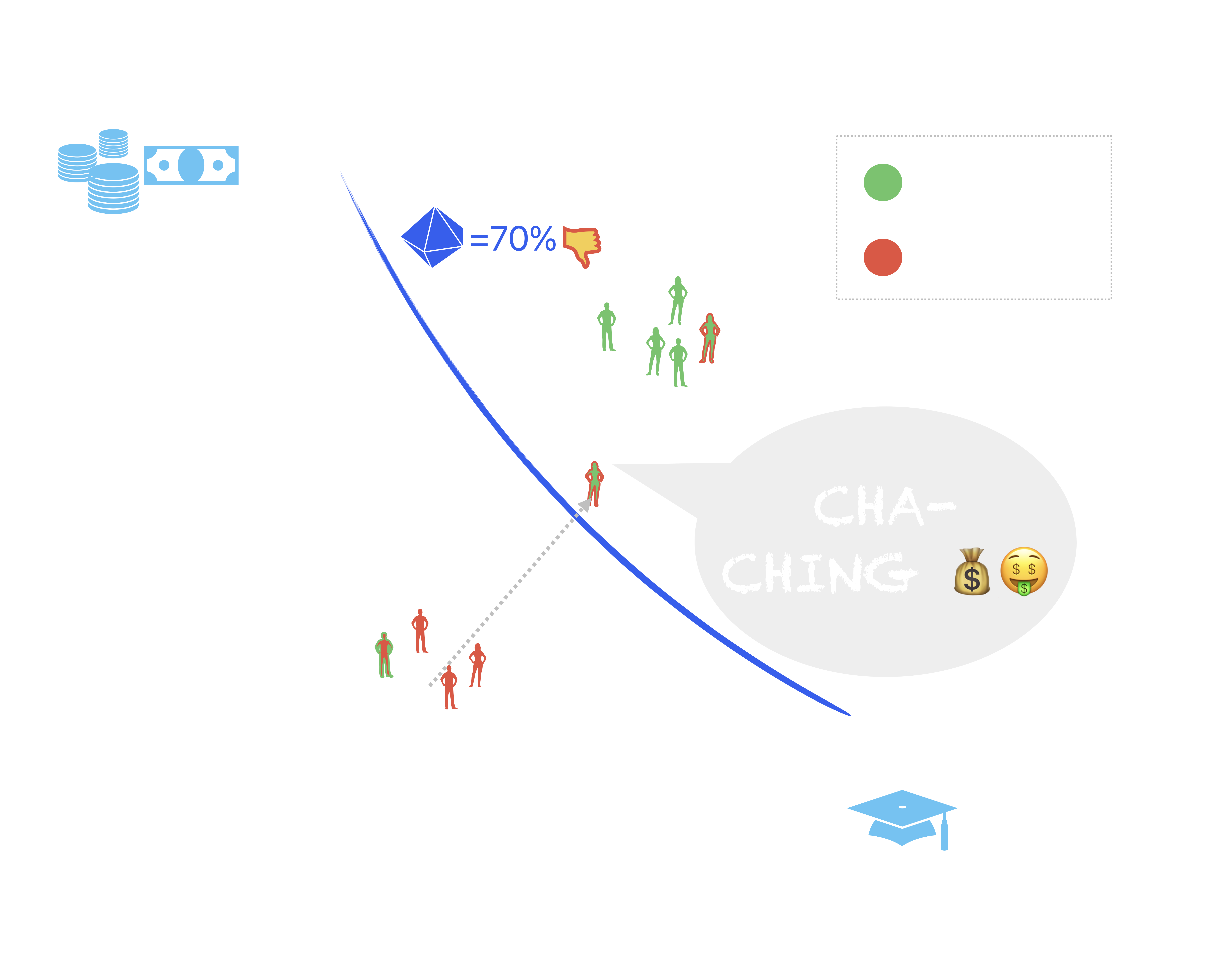

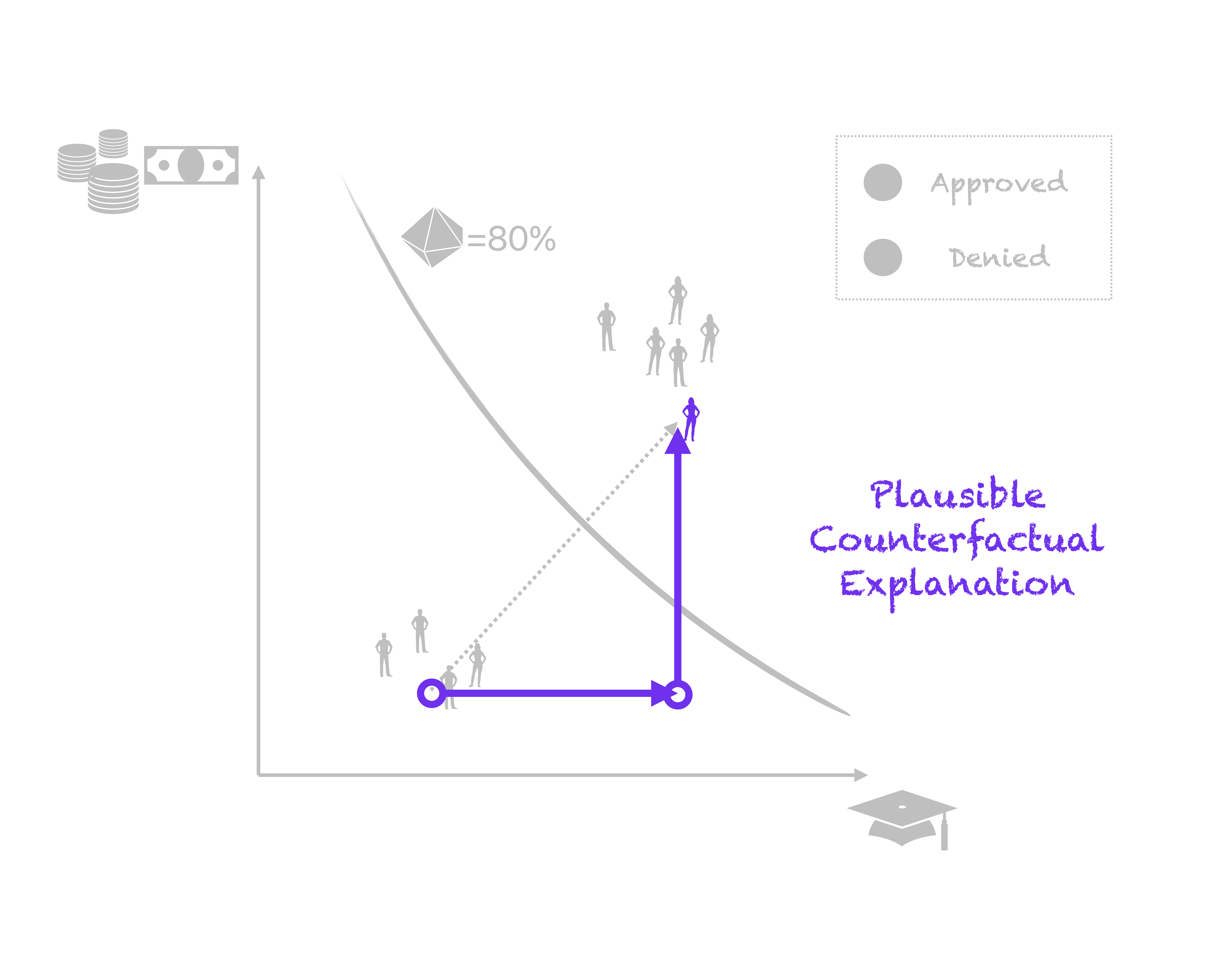

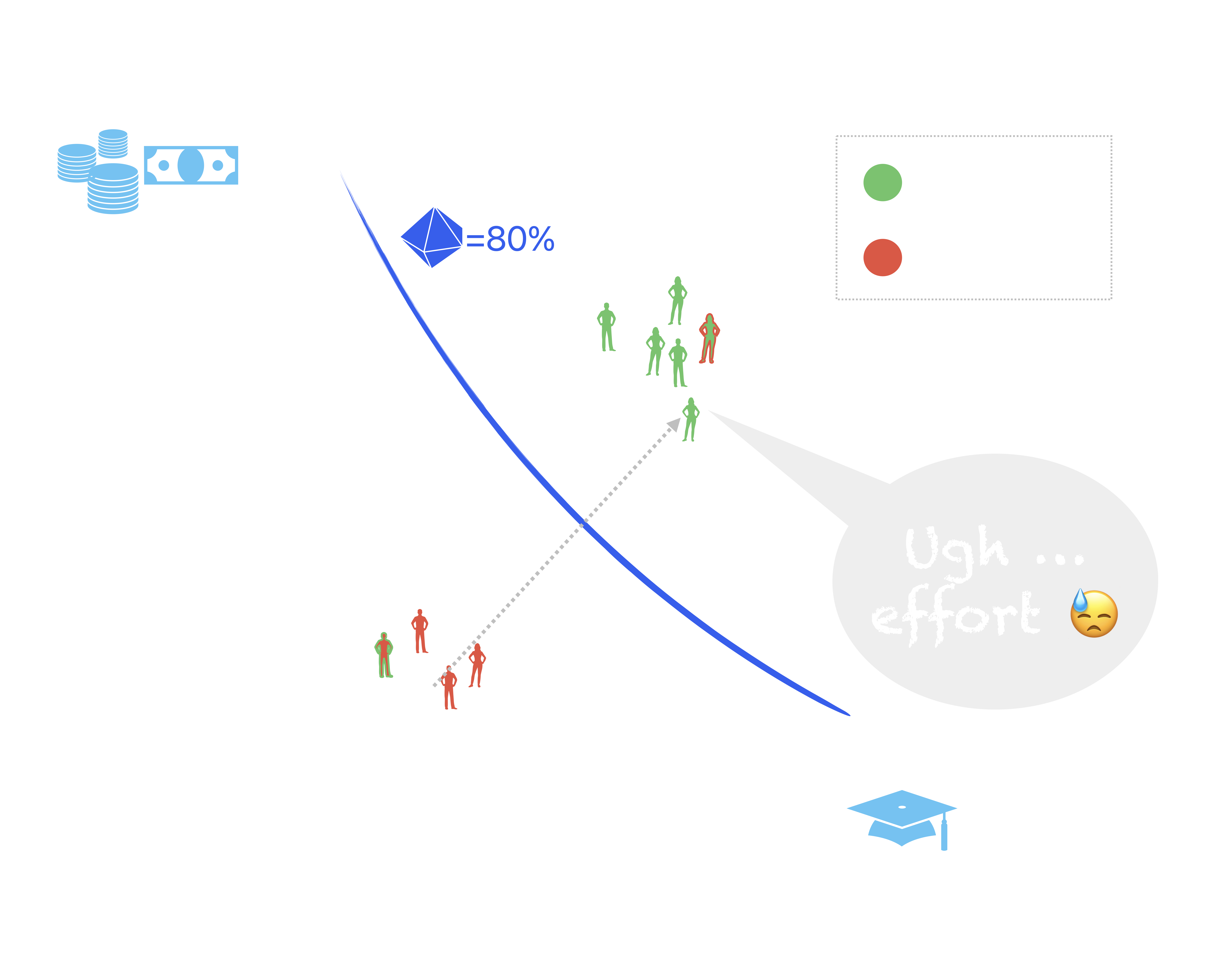

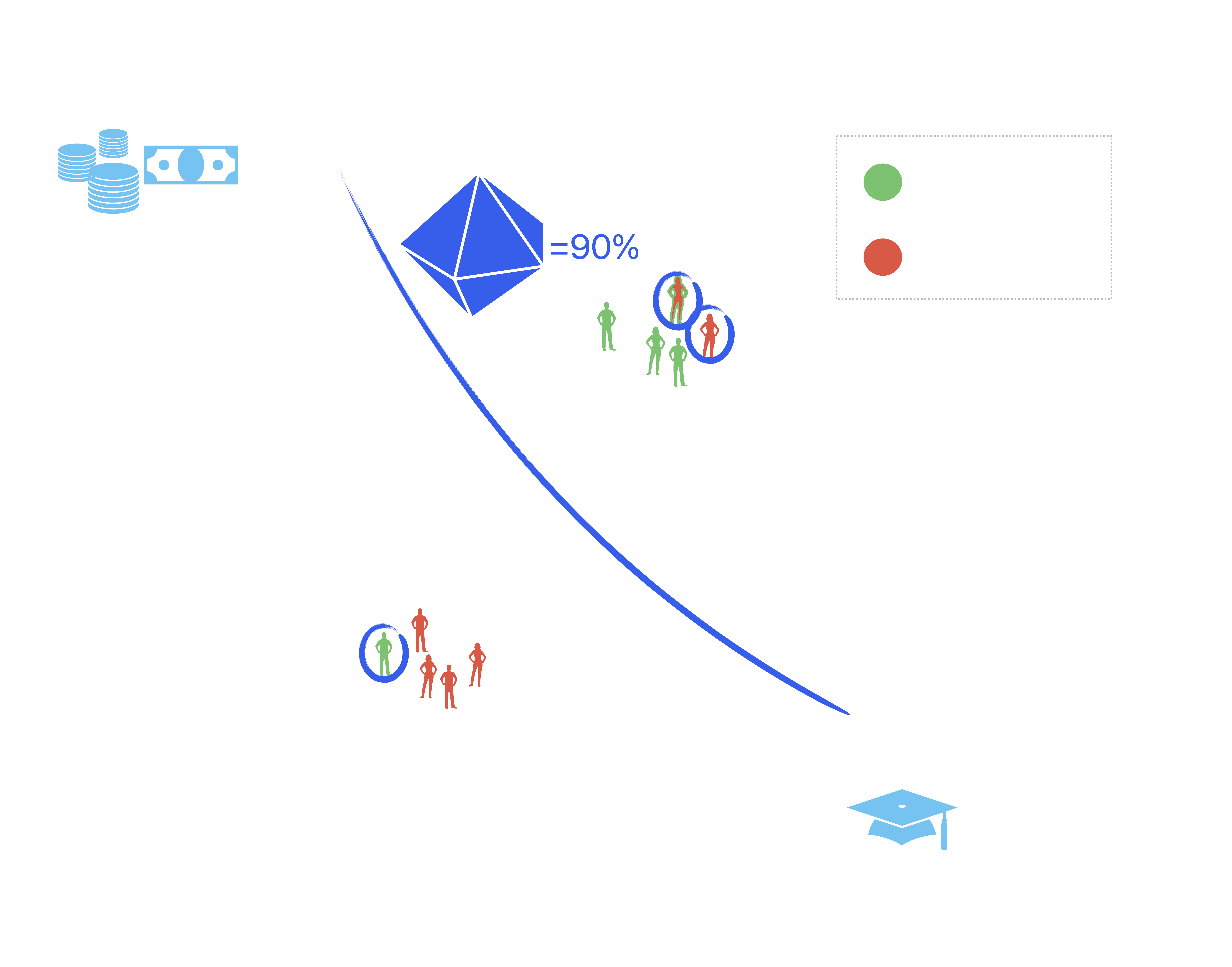

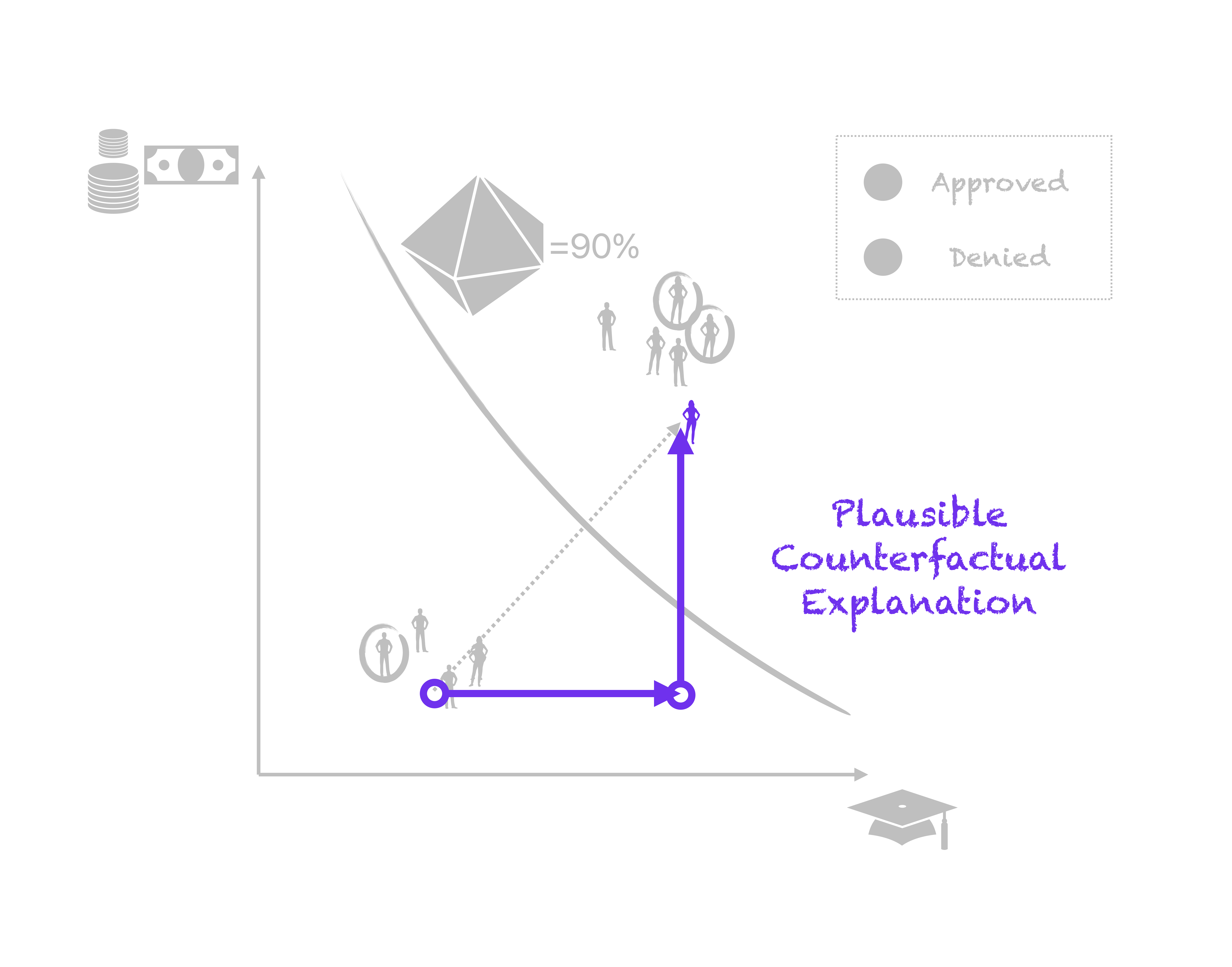

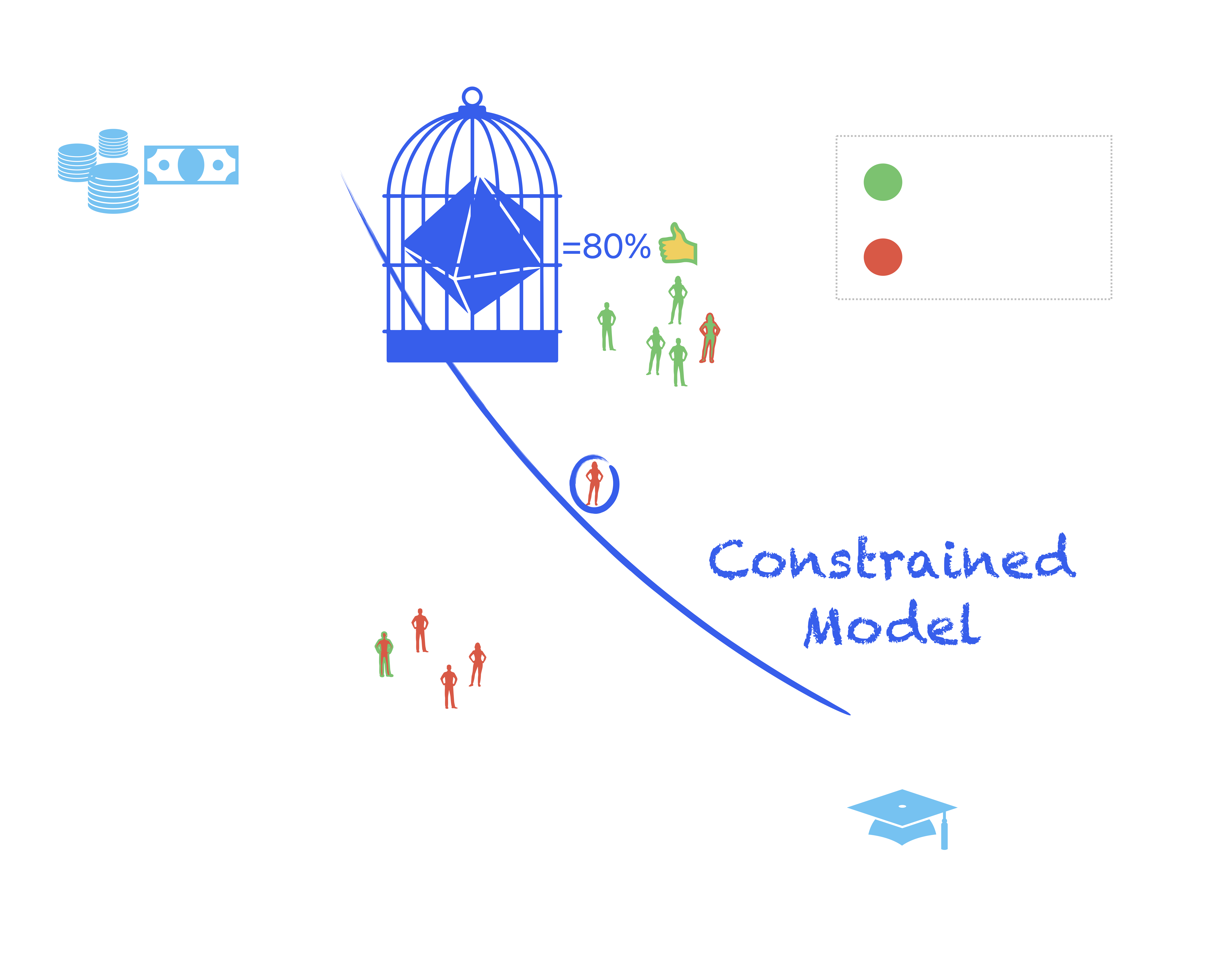

Holding Models Accountable

‘ok but agi bruh’

In all seriousness …

- Useful? Absolutely

- AGI? Sentient? Conscious? No: ‘emergence’ in complex systems does not imply any of this

- Emergence is broadly described as system-level behaviour of a complex system that differs from the behaviour of its constituent parts:

- Example 1: asset price bubbles in financial markets -> locally predictable, rational behaviour of individual participants, but market failure in aggregate

- Example 2: tornado -> just dust and debris, but also a possible disaster

- Does it matter?