Explaining Models or Modelling Explanations

Counterfactual Explanations and Algorithmic Recourse for Trustworthy AI

Delft University of Technology

April 16, 2026

Background

Economist, then PhD CS

How can we make opaque AI more trustworthy?

Explainable AI, Adversarial ML, Probabilistic ML

Core developer and maintainer of Taija (Trustworthy AI in Julia)

Agenda

- Intro: counterfactual explanations (CE) and algorithmic recourse (AR)

- Unexpected Challenges: endogenous dynamics of AR

- Paradigm Shift: explanations should be faithful first, plausible second

- New Opportunities: teaching models plausible explanations through CE

Intro

Training Opaque Models

Tweaking Parameters

Objective:

\[ \begin{aligned} \min_{\textcolor{orange}{\theta}} \{ {\text{yloss}(M_{\theta}(\mathbf{x}),\mathbf{y})} \} \end{aligned} \]

Solution:

\[ \begin{aligned} \theta_{t+1} &= \theta_t - \nabla_{\textcolor{orange}{\theta}} \{ {\text{yloss}(M_{\theta}(\mathbf{x}),\mathbf{y})} \} \\ \textcolor{orange}{\theta^*}&=\theta_T \end{aligned} \]
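The parameter update above can be sketched in a few lines of plain Python. This is a minimal illustration, not the setup used in the talk: the logistic model, the binary cross-entropy used for `yloss`, the toy two-cluster data, and the step size `lr` (left implicit in the update rule) are all assumptions made for the example.

```python
import math
import random

def sigmoid(z):
    # Clamp z so math.exp cannot overflow.
    z = max(-30.0, min(30.0, z))
    return 1.0 / (1.0 + math.exp(-z))

def predict(theta, x):
    """M_theta(x): probability of class 1, with theta = [w_1, ..., w_d, b]."""
    return sigmoid(sum(w * xi for w, xi in zip(theta, x)) + theta[-1])

def yloss(theta, X, y):
    """Mean binary cross-entropy (an assumed choice of yloss)."""
    eps = 1e-12
    total = 0.0
    for x, t in zip(X, y):
        p = predict(theta, x)
        total -= t * math.log(p + eps) + (1 - t) * math.log(1 - p + eps)
    return total / len(X)

def grad_theta(theta, X, y):
    """Gradient of yloss with respect to the parameters theta."""
    g = [0.0] * len(theta)
    for x, t in zip(X, y):
        err = predict(theta, x) - t
        for j, xj in enumerate(x):
            g[j] += err * xj
        g[-1] += err  # bias term
    return [gj / len(X) for gj in g]

def train(X, y, steps=300, lr=0.5):
    """Iterate theta_{t+1} = theta_t - lr * gradient and return theta_T."""
    theta = [0.0] * (len(X[0]) + 1)
    for _ in range(steps):
        g = grad_theta(theta, X, y)
        theta = [th - lr * gj for th, gj in zip(theta, g)]
    return theta

# Toy data: two Gaussian clusters, one per class (illustrative only).
random.seed(0)
X = [[random.gauss(-2, 1), random.gauss(-2, 1)] for _ in range(50)] + \
    [[random.gauss(2, 1), random.gauss(2, 1)] for _ in range(50)]
y = [0] * 50 + [1] * 50

theta_star = train(X, y)
```

Here the inputs stay fixed and only the parameters move; `theta_star` plays the role of \(\theta^*\) in the slides that follow.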

Explaining Opaque Models

Tweaking Inputs

Objective:

\[ \begin{aligned} \min_{\textcolor{purple}{\mathbf{x}}} \{ {\text{yloss}(M_{\textcolor{orange}{\theta^*}}(\mathbf{x}),\mathbf{y^{\textcolor{purple}{+}} }) + \lambda \text{reg}(\mathbf{x};\cdot)} \} \end{aligned} \]

Solution:

\[ \begin{aligned} \mathbf{x}_{t+1} &= \mathbf{x}_t - \nabla_{\textcolor{purple}{\mathbf{x}}} \{ \text{yloss}(M_{\textcolor{orange}{\theta^*}}(\mathbf{x}),\mathbf{y^{\textcolor{purple}{+}} }) \\&+ \lambda \text{reg}(\mathbf{x};\cdot) \} \\ \textcolor{purple}{\mathbf{x}^*}&=\mathbf{x}_T \end{aligned} \]
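The input update above mirrors training, except that the parameters are frozen and the gradient is taken with respect to the input. The sketch below is illustrative only: the fixed weights `THETA_STAR`, the cross-entropy `yloss`, the squared distance to the factual used for `reg`, and the values of `lam` and `lr` are assumptions, not the configuration used in the talk.

```python
import math

# Hypothetical fitted parameters theta* of a logistic model (weights, bias).
THETA_STAR = ([1.0, 1.0], 0.0)

def predict(x, theta=THETA_STAR):
    """M_{theta*}(x): probability of the target class."""
    w, b = theta
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def counterfactual(x0, y_target=1.0, lam=0.1, lr=0.1, steps=500):
    """Gradient descent on x for yloss(M(x), y+) + lam * ||x - x0||^2."""
    w, _ = THETA_STAR
    x = list(x0)
    for _ in range(steps):
        p = predict(x)
        # d yloss / dx = (p - y+) * w for cross-entropy on a logistic model;
        # d reg / dx   = 2 * (x - x0) for the squared-distance penalty.
        grad = [(p - y_target) * wi + 2 * lam * (xi - x0i)
                for wi, xi, x0i in zip(w, x, x0)]
        x = [xi - lr * gi for xi, gi in zip(x, grad)]
    return x

x_factual = [-2.0, -1.0]            # predicted as the negative class
x_cf = counterfactual(x_factual)    # plays the role of x* = x_T
```

The penalty weight `lam` trades validity against proximity: a larger value keeps `x_cf` closer to the factual but may stop the search before the prediction flips.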

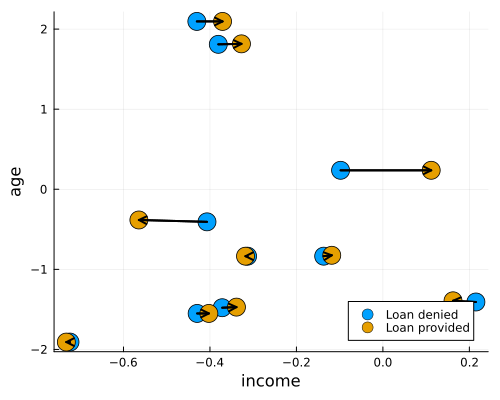

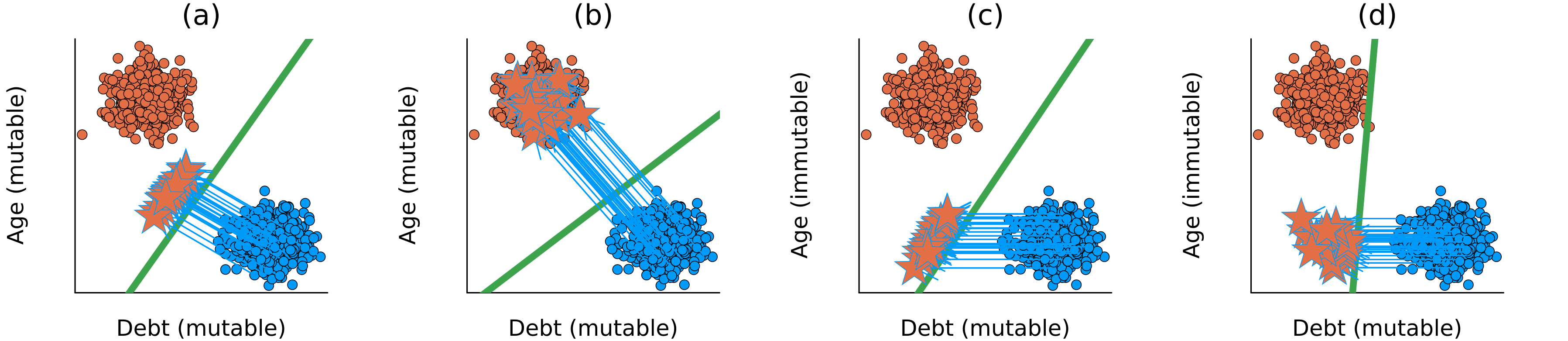

Algorithmic Recourse

Provided a CE is valid, plausible and actionable, it can be used to offer recourse to individuals negatively affected by a model's decision.

“If your income had been x, then …”

Unexpected Challenges

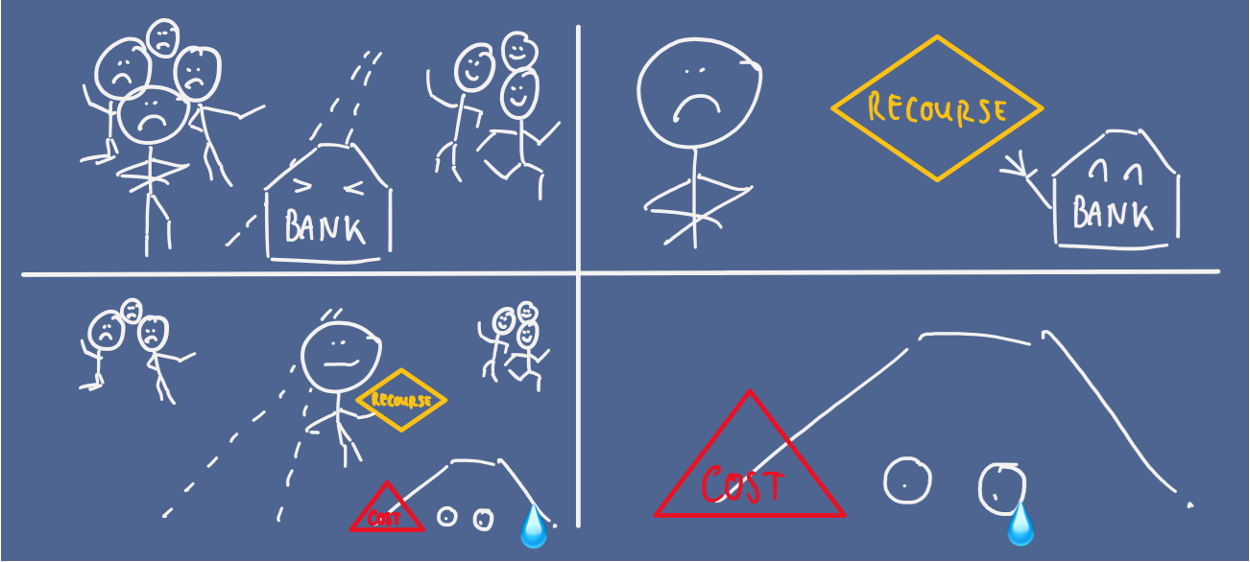

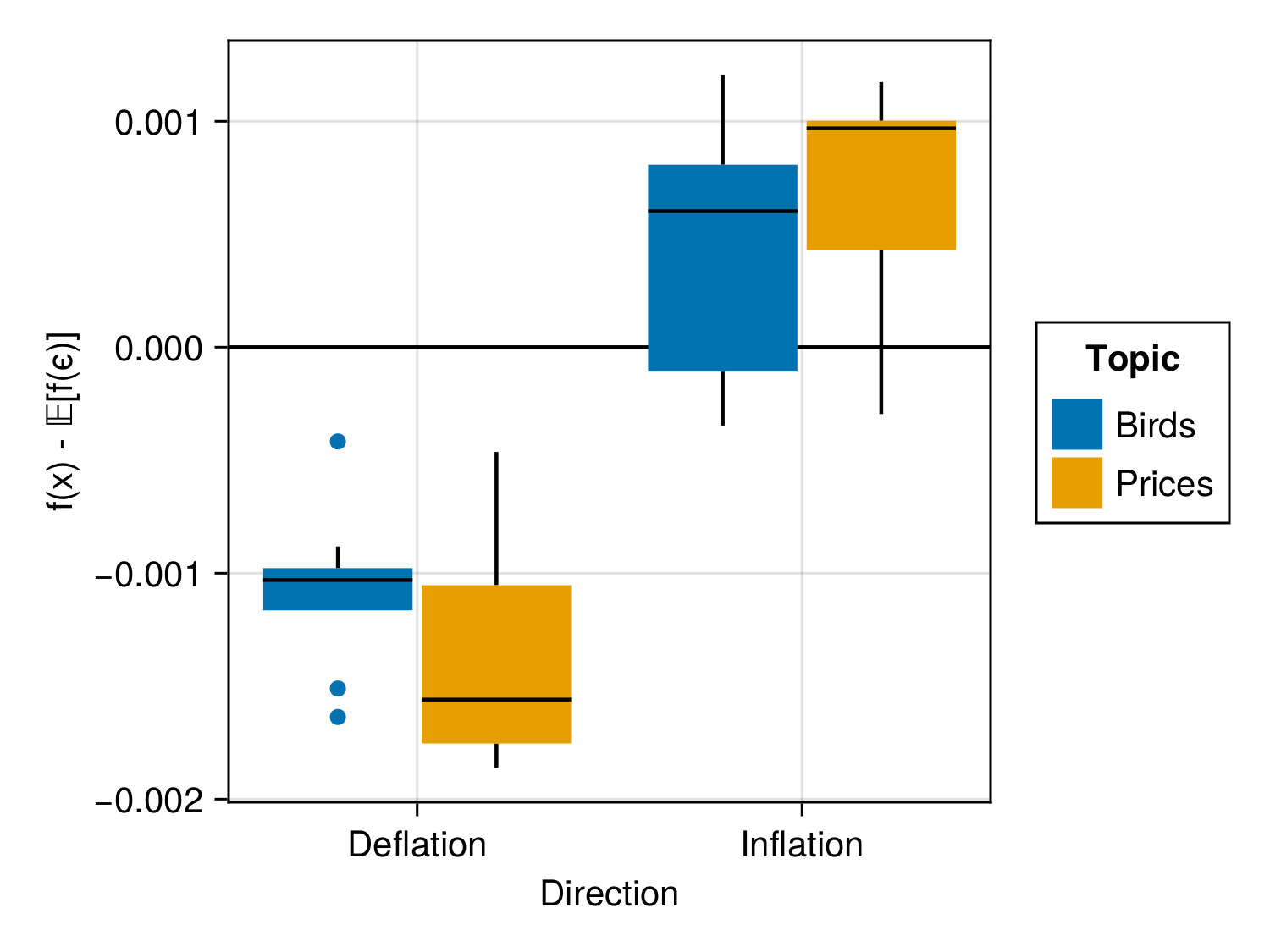

Hidden Cost of Implausibility

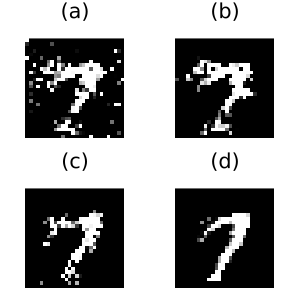

Insight: Implausible Explanations Are Costly

Mitigation Strategies

Reframed Objective

\[ \begin{aligned} \mathbf{s}^\prime &= \arg \min_{\mathbf{s}^\prime \in \mathcal{S}} \{ {\text{yloss}(M(f(\mathbf{s}^\prime)),y^*)} \\ &+ \lambda_1 {\text{cost}(f(\mathbf{s}^\prime))} + \lambda_2 {\text{extcost}(f(\mathbf{s}^\prime))} \} \end{aligned} \]

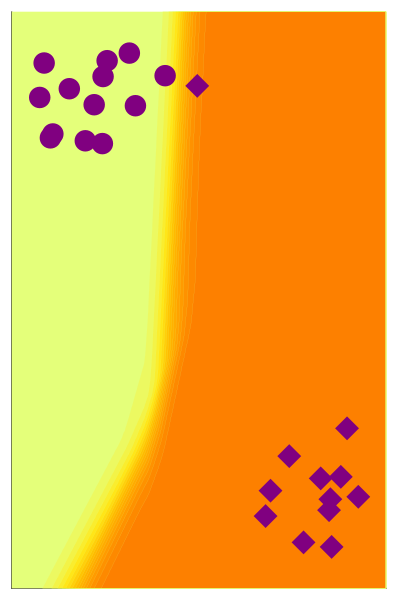

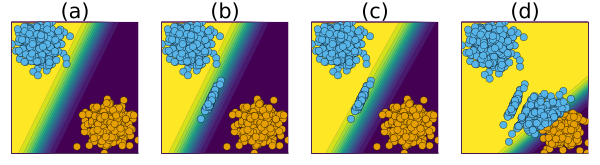

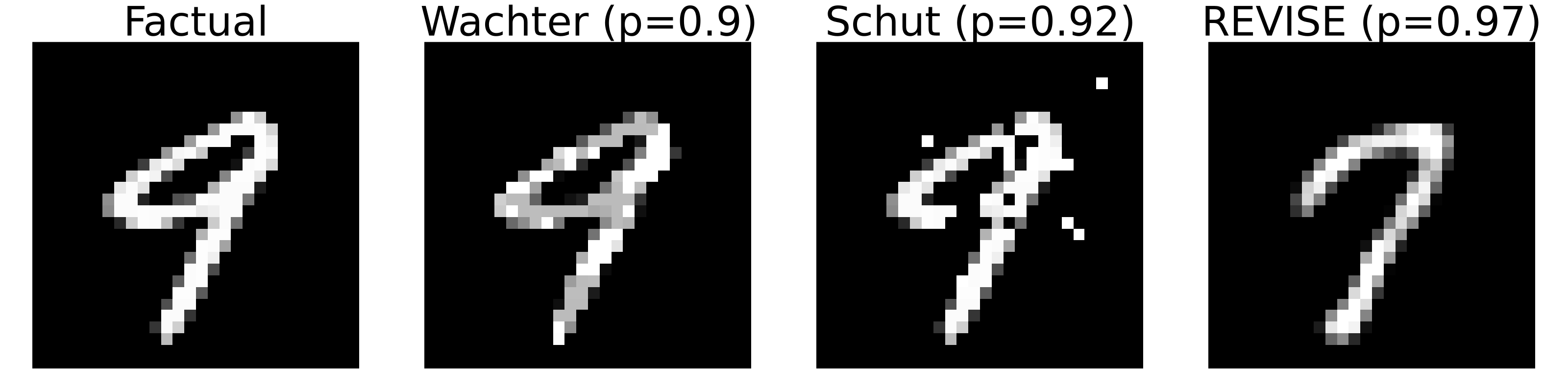

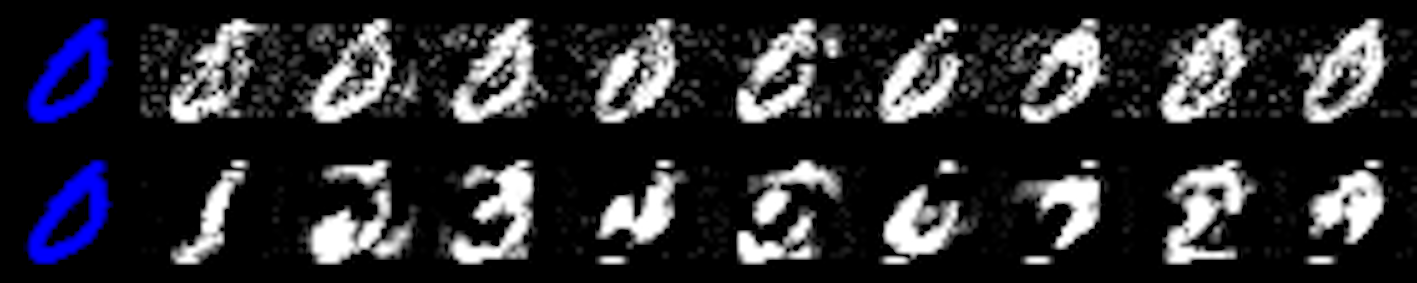

Explanation or Adversarial Example?

Plausibility at all costs?

All of these counterfactuals are valid explanations for the model’s prediction.

Pick your poison …

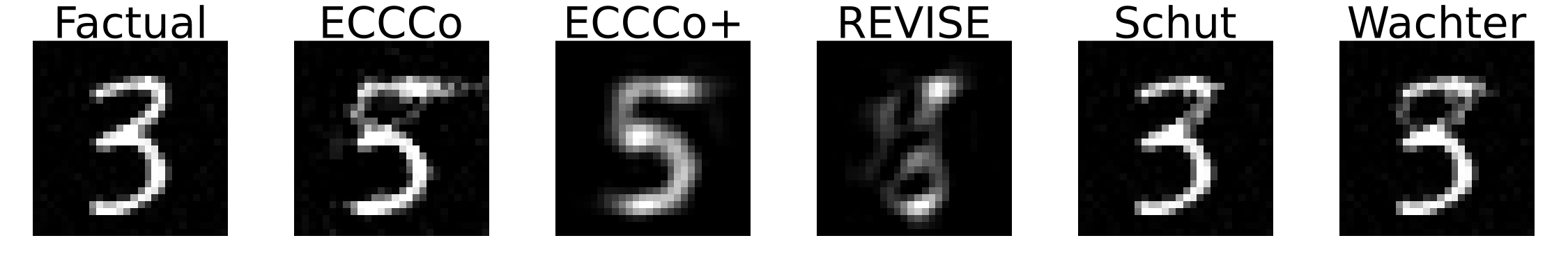

Faithful First, Plausible Second

Putting it all together

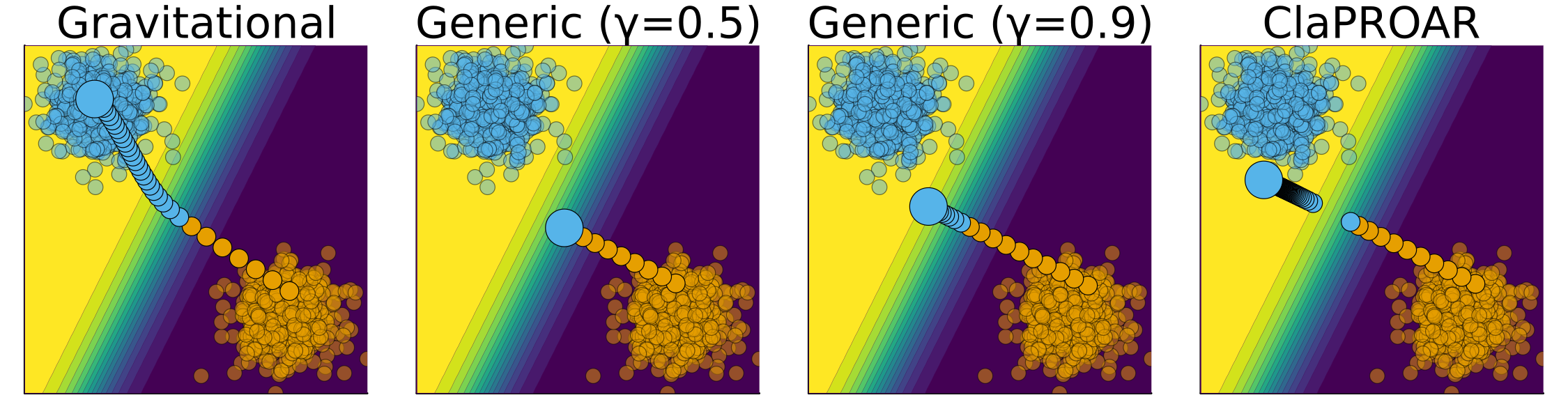

Counterfactual Training

First, Tweaking Inputs

\[ \begin{aligned} \mathbf{x}_{t+1} &= \mathbf{x}_t - \nabla_{\textcolor{purple}{\mathbf{x}}} \{ {\text{ECCCo}(M_{\textcolor{orange}{\theta^*}}(\mathbf{x}),\mathbf{y^{\textcolor{purple}{+}} })} \} \\ \textcolor{purple}{\mathbf{x}^*}&=\mathbf{x}_T \end{aligned} \]

Then, Tweaking Parameters

\[ \begin{aligned} \theta_{t+1} &= \theta_t - \nabla_{\textcolor{orange}{\theta}} \{ {\text{yloss}(M_{\theta}(\mathbf{x}),\mathbf{y})} + \text{div}(\textcolor{purple}{\mathbf{x}^*},\mathbf{x}^+,y^+; \theta) \} \\ \textcolor{orange}{\theta^*}&=\theta_T \end{aligned} \]

Counterfactual Training

- Contrast faithful CE with data \(\rightarrow\) Explainability \(\uparrow\)

- Feature mutability constraints \(\rightarrow\) Actionability \(\uparrow\) (holds provably under certain assumptions)

- Bonus: use nascent CE as AE \(\rightarrow\) Robustness \(\uparrow\)
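To make the idea of a feature mutability constraint concrete, the sketch below shows one common way to impose it during counterfactual search: the gradient is zeroed for features marked immutable, so they never move. This illustrates the constraint at search time only; how it enters the training objective is not shown. The weights `THETA_STAR`, the `mutable` mask, the loss and penalty choices, and all hyperparameters are assumptions made for the example.

```python
import math

# Hypothetical fitted parameters theta* of a logistic model (weights, bias).
THETA_STAR = ([1.0, 1.0], 0.0)

def predict(x, theta=THETA_STAR):
    """Probability of the target class under the frozen model."""
    w, b = theta
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def constrained_counterfactual(x0, mutable, y_target=1.0,
                               lam=0.05, lr=0.1, steps=500):
    """Counterfactual search that leaves immutable features untouched."""
    w, _ = THETA_STAR
    x = list(x0)
    for _ in range(steps):
        p = predict(x)
        grad = [(p - y_target) * wi + 2 * lam * (xi - x0i)
                for wi, xi, x0i in zip(w, x, x0)]
        # Mask the update: immutable features receive a zero step.
        x = [xi - lr * gi if m else xi
             for xi, gi, m in zip(x, grad, mutable)]
    return x

x_factual = [-2.0, -1.0]
mutable = [True, False]   # second feature (say, a protected attribute) is fixed
x_cf = constrained_counterfactual(x_factual, mutable)
```

With the mask in place, the full change needed to flip the prediction is carried by the mutable feature alone, which typically makes the counterfactual more costly than an unconstrained one.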

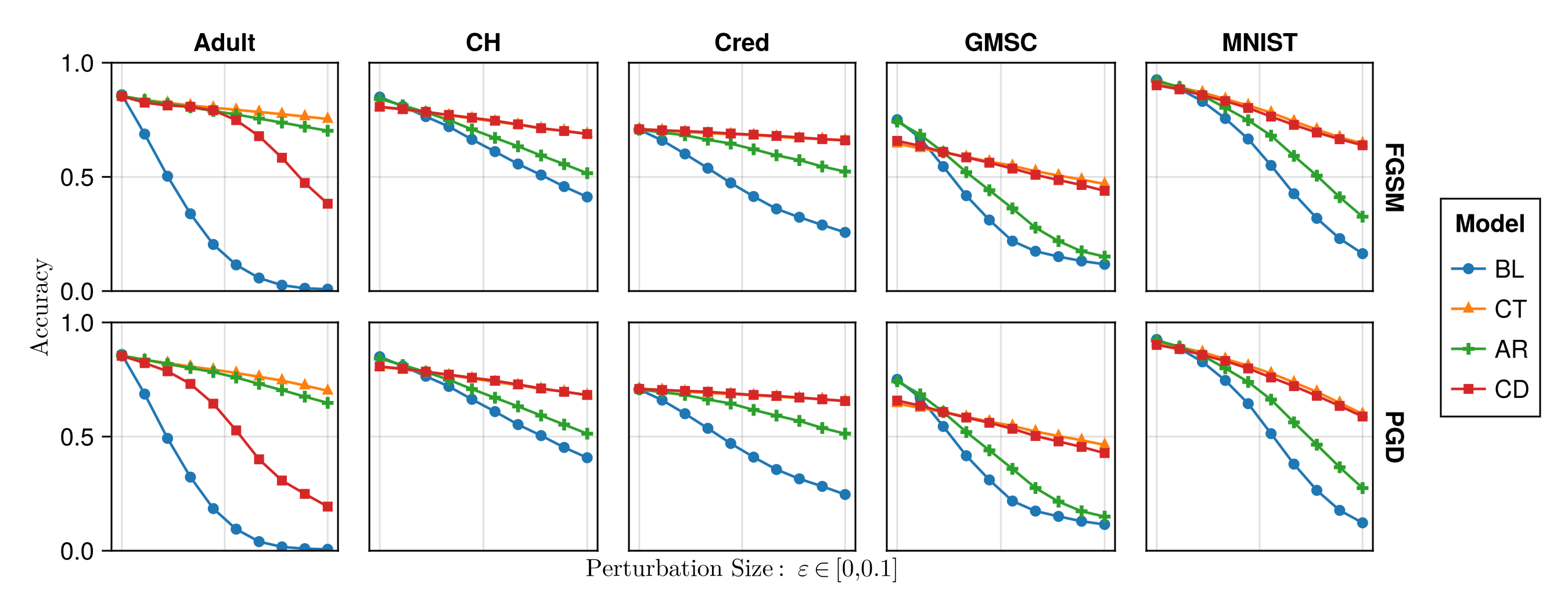

Counterfactual Training: Results

The Hard Numbers

Extensive experiments and ablation studies on nine datasets (synthetic, tabular and vision), generating millions of counterfactuals:

- Plausibility of CEs increases by up to 90%.

- Actionability: cost of reaching valid counterfactuals with protected features decreases by 19% on average.

- Models’ adversarial robustness improves consistently.

Check it out!

Taija

- Model Explainability (CounterfactualExplanations.jl)

- Predictive Uncertainty Quantification (ConformalPrediction.jl)

- Effortless Bayesian Deep Learning (LaplaceRedux.jl)

- … and more!

- Work presented @ JuliaCon 2022, 2023, 2024.

- Google Summer of Code and Julia Season of Contributions 2024.

- Total of three software projects @ TU Delft.

Trustworthy AI in Julia: github.com/JuliaTrustworthyAI