Counterfactual Training

Teaching Models Plausible and Actionable Explanations

Delft University of Technology

March 23, 2026

Training Opaque Models

Tweaking Parameters

Objective:

\[ \begin{aligned} \min_{\textcolor{orange}{\theta}} \{ {\text{yloss}(M_{\theta}(\mathbf{x}),\mathbf{y})} \} \end{aligned} \]

Solution:

\[ \begin{aligned} \theta_{t+1} &= \theta_t - \nabla_{\textcolor{orange}{\theta}} \{ {\text{yloss}(M_{\theta}(\mathbf{x}),\mathbf{y})} \} \\ \textcolor{orange}{\theta^*}&=\theta_T \end{aligned} \]
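The update rule above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: it assumes a logistic model for \(M_{\theta}\), binary cross-entropy for yloss, a toy dataset, and a step size `lr` that the slide's update rule leaves implicit.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict(theta, x):
    # Assumed model: M_theta(x) = sigmoid(w . x + b), with theta = [w..., b].
    return sigmoid(sum(w * xj for w, xj in zip(theta[:-1], x)) + theta[-1])

def yloss(theta, X, y):
    # Mean binary cross-entropy (an assumed choice of yloss).
    total = 0.0
    for xi, yi in zip(X, y):
        p = min(max(predict(theta, xi), 1e-12), 1.0 - 1e-12)
        total -= yi * math.log(p) + (1 - yi) * math.log(1.0 - p)
    return total / len(X)

def grad_theta(theta, X, y):
    # Gradient of the mean cross-entropy with respect to theta.
    g = [0.0] * len(theta)
    for xi, yi in zip(X, y):
        err = predict(theta, xi) - yi
        for j, xj in enumerate(xi):
            g[j] += err * xj
        g[-1] += err
    return [gj / len(X) for gj in g]

def train(X, y, steps=500, lr=0.5):
    # theta_{t+1} = theta_t - lr * grad; theta* = theta_T.
    theta = [0.0] * (len(X[0]) + 1)
    for _ in range(steps):
        g = grad_theta(theta, X, y)
        theta = [t - lr * gj for t, gj in zip(theta, g)]
    return theta

# Toy data (hypothetical): two features, two classes.
X = [[0.0, 0.0], [0.0, 1.0], [2.0, 2.0], [3.0, 2.0]]
y = [0, 0, 1, 1]
theta_star = train(X, y)
```

Here `theta_star` plays the role of \(\theta^*\): the inputs stay fixed and only the parameters move.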

Explaining Opaque Models

Tweaking Inputs

Objective:

\[ \begin{aligned} \min_{\textcolor{purple}{\mathbf{x}}} \{ {\text{yloss}(M_{\textcolor{orange}{\theta^*}}(\mathbf{x}),\mathbf{y^{\textcolor{purple}{+}} }) + \lambda \text{reg}(\mathbf{x};\cdot)} \} \end{aligned} \]

Solution:

\[ \begin{aligned} \mathbf{x}_{t+1} &= \mathbf{x}_t - \nabla_{\textcolor{purple}{\mathbf{x}}} \{ \text{yloss}(M_{\textcolor{orange}{\theta^*}}(\mathbf{x}),\mathbf{y^{\textcolor{purple}{+}} }) \\&+ \lambda \text{reg}(\mathbf{x};\cdot) \} \\ \textcolor{purple}{\mathbf{x}^*}&=\mathbf{x}_T \end{aligned} \]
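The same descent can be run on the input instead of the parameters. The sketch below is a hedged illustration under several assumptions: the fitted parameters `W_STAR` and `B_STAR` are hypothetical, yloss is binary cross-entropy toward the target class, reg is the squared Euclidean distance to the factual input (the slide leaves reg unspecified), and the step size `lr` is again an added assumption.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

# Hypothetical fitted parameters theta* of a logistic model (held fixed).
W_STAR = [1.5, 1.0]
B_STAR = -3.0

def predict(x):
    return sigmoid(sum(w * xj for w, xj in zip(W_STAR, x)) + B_STAR)

def counterfactual(x0, target=1, lam=0.1, steps=200, lr=0.5):
    # x_{t+1} = x_t - lr * grad_x { yloss(M(x), target) + lam * ||x - x0||^2 }
    x = list(x0)
    for _ in range(steps):
        p = predict(x)
        grad = [
            (p - target) * w + lam * 2.0 * (xj - x0j)
            for w, xj, x0j in zip(W_STAR, x, x0)
        ]
        x = [xj - lr * g for xj, g in zip(x, grad)]
    return x  # x* = x_T

x_factual = [0.0, 1.0]
x_cf = counterfactual(x_factual)
```

With these assumed values the factual input is predicted as class 0 and the returned `x_cf` crosses the decision boundary into class 1, while the penalty keeps it close to `x_factual`.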

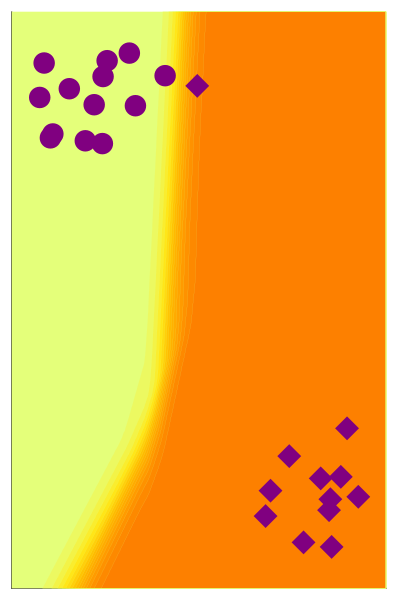

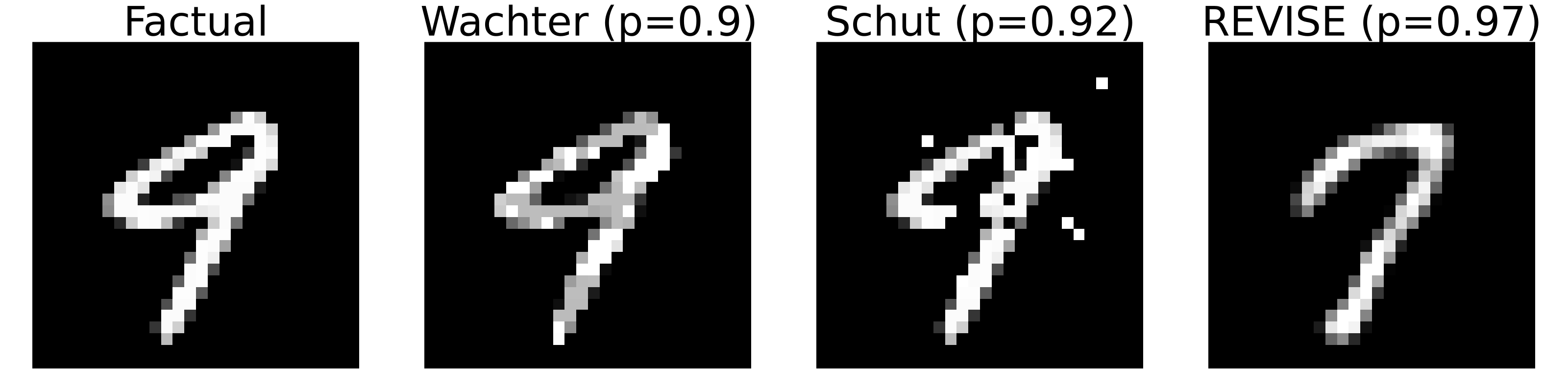

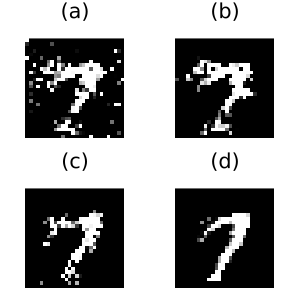

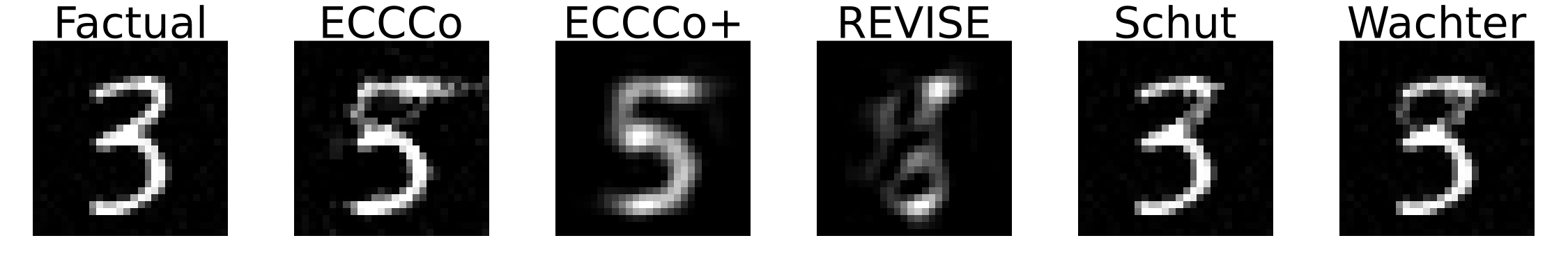

Explanation or Adversarial Example?

Plausibility at all cost?

All of these counterfactuals are valid explanations for the model’s prediction.

Pick your poison …

Faithful First, Plausible Second

Putting it all together

Counterfactual Training

First, Tweaking Inputs

\[ \begin{aligned} \mathbf{x}_{t+1} &= \mathbf{x}_t - \nabla_{\textcolor{purple}{\mathbf{x}}} \{ {\text{ECCCo}(M_{\textcolor{orange}{\theta^*}}(\mathbf{x}),\mathbf{y^{\textcolor{purple}{+}} })} \} \\ \textcolor{purple}{\mathbf{x}^*}&=\mathbf{x}_T \end{aligned} \]

Then, Tweaking Parameters

\[ \begin{aligned} \theta_{t+1} &= \theta_t - \nabla_{\textcolor{orange}{\theta}} \{ {\text{yloss}(M_{\theta}(\mathbf{x}),\mathbf{y})} + \text{div}(\textcolor{purple}{\mathbf{x}^*},\mathbf{x}^+,y^+; \theta) \} \\ \textcolor{orange}{\theta^*}&=\theta_T \end{aligned} \]
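The two steps can be combined in one alternating loop. What follows is only a toy sketch under loudly stated assumptions, not the method as implemented by the authors: the model is logistic, the counterfactual search is a few plain gradient steps rather than ECCCo, div is taken to be an energy difference \(E(\mathbf{x}^+, y^+) - E(\mathbf{x}^*, y^+)\) with the energy assumed to be the negative target-class logit, the counterfactual is treated as a constant when differentiating with respect to \(\theta\), and the weight `mu`, the step sizes and the data are all hypothetical. A practical version would likely need extra regularisation of the energy term, which is omitted here.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def logit(theta, x):
    # theta = [w..., b]
    return sum(w * xj for w, xj in zip(theta[:-1], x)) + theta[-1]

def nascent_counterfactual(theta, x, target, steps=5, lr=0.5):
    # First, tweak inputs: a few gradient steps on x toward `target`.
    x = list(x)
    for _ in range(steps):
        p = sigmoid(logit(theta, x))
        x = [xj - lr * (p - target) * w for xj, w in zip(x, theta[:-1])]
    return x

def counterfactual_training(X, y, epochs=100, lr=0.1, mu=0.1):
    d = len(X[0])
    theta = [0.0] * (d + 1)
    for _ in range(epochs):
        grad = [0.0] * (d + 1)
        for xi, yi in zip(X, y):
            # Classification term: gradient of binary cross-entropy.
            err = sigmoid(logit(theta, xi)) - yi
            for j in range(d):
                grad[j] += err * xi[j]
            grad[-1] += err
            # Explanation term: contrast x* with a real target-class sample x+.
            target = 1 - yi
            x_cf = nascent_counterfactual(theta, xi, target)
            x_pos = next(xk for xk, yk in zip(X, y) if yk == target)
            sign = 1.0 if target == 1 else -1.0
            # Assumed div = -sign * (logit(x+) - logit(x*)); the bias cancels.
            for j in range(d):
                grad[j] += mu * (-sign) * (x_pos[j] - x_cf[j])
        # Then, tweak parameters with the combined gradient.
        theta = [t - lr * g / len(X) for t, g in zip(theta, grad)]
    return theta

# Toy data (hypothetical).
X = [[0.0, 0.0], [0.0, 1.0], [2.0, 2.0], [3.0, 2.0]]
y = [0, 0, 1, 1]
theta_ct = counterfactual_training(X, y)
```

The point of the sketch is the control flow: counterfactuals are regenerated from the current parameters at every epoch, so explanations and parameters are shaped together.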

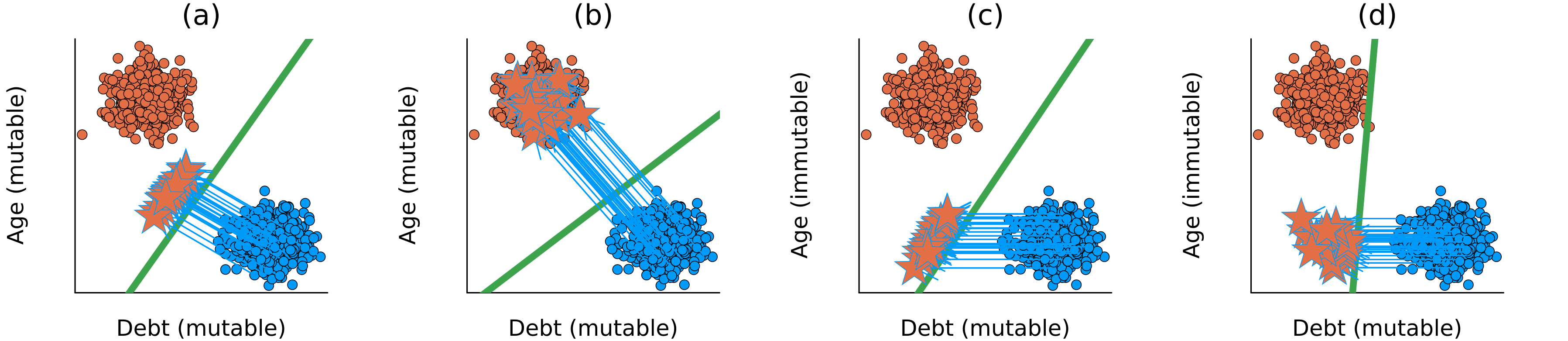

Counterfactual Training

- Contrast faithful CE with data \(\rightarrow\) Explainability \(\uparrow\)

- Feature mutability constraints \(\rightarrow\) Actionability \(\uparrow\) (holds provably under certain assumptions)

- Bonus: use nascent CE as AE \(\rightarrow\) Robustness \(\uparrow\)
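One simple way to picture a feature mutability constraint is to mask the input gradient so that immutable features never move during the counterfactual search. The sketch below assumes a logistic model with hypothetical parameters `W_STAR` and `B_STAR`, a squared-distance penalty and a boolean `mutable` mask; how the constraints enter training itself is not shown here.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

# Hypothetical fixed parameters of a logistic model.
W_STAR = [1.5, 1.0]
B_STAR = -3.0

def predict(x):
    return sigmoid(sum(w * xj for w, xj in zip(W_STAR, x)) + B_STAR)

def constrained_counterfactual(x0, mutable, target=1, lam=0.1, steps=200, lr=0.5):
    # Gradient descent on x where immutable features receive a zero gradient.
    x = list(x0)
    for _ in range(steps):
        p = predict(x)
        grad = [
            ((p - target) * w + lam * 2.0 * (xj - x0j)) if m else 0.0
            for w, xj, x0j, m in zip(W_STAR, x, x0, mutable)
        ]
        x = [xj - lr * g for xj, g in zip(x, grad)]
    return x

x_factual = [0.0, 1.0]
# Assumption for illustration: the second feature is immutable (e.g. protected).
x_cf_masked = constrained_counterfactual(x_factual, mutable=[True, False])
```

Under these assumptions the second feature of `x_cf_masked` equals its factual value, so the whole change needed to flip the prediction is carried by the mutable feature.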

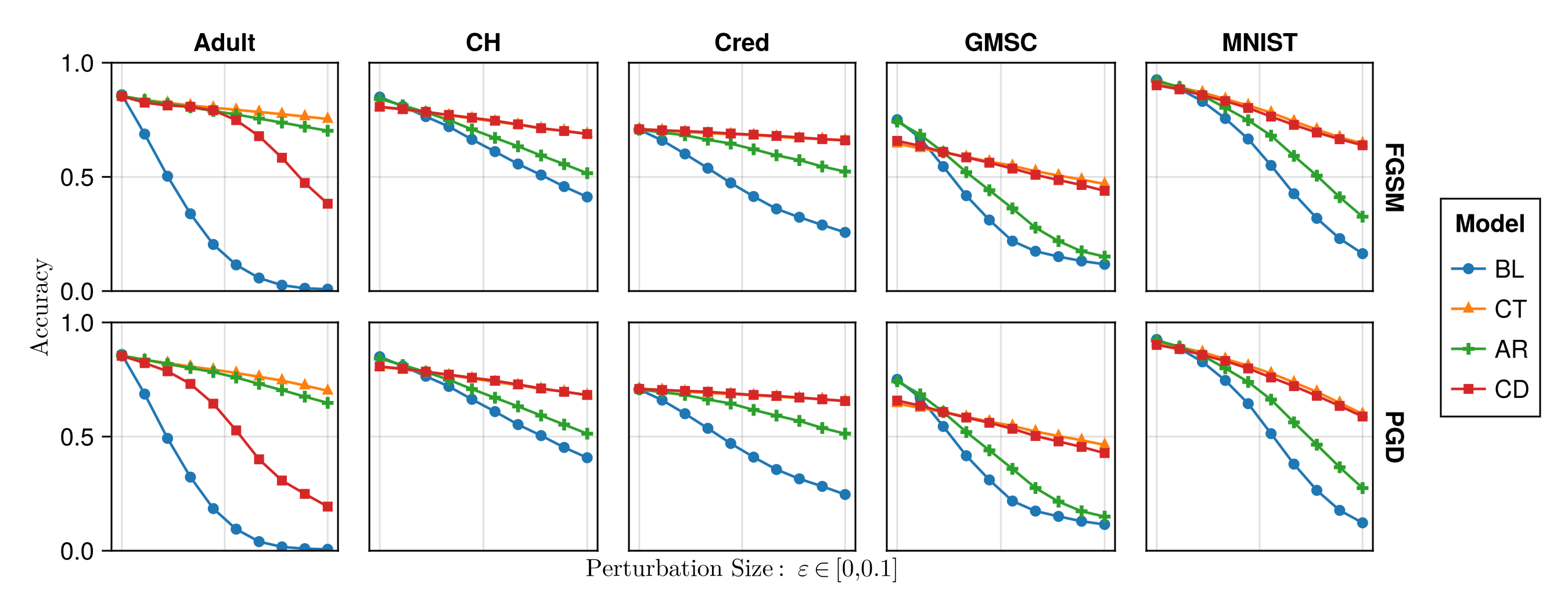

Counterfactual Training: Results

The Hard Numbers

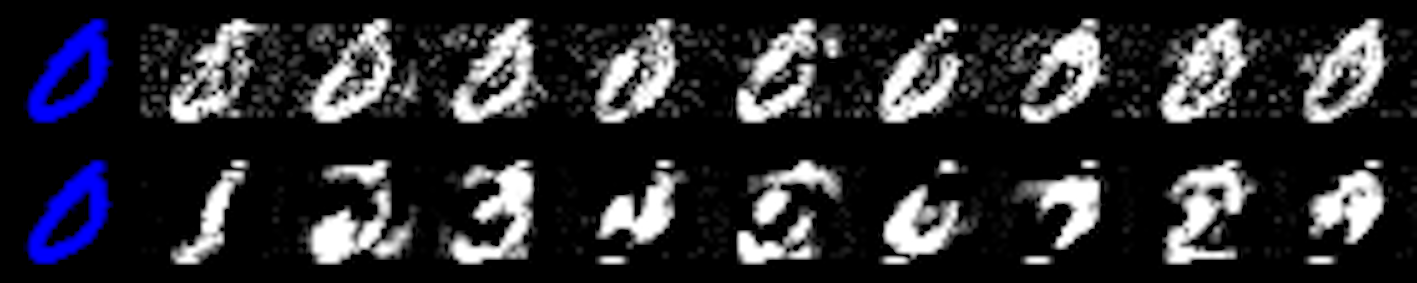

Extensive experiments and ablation studies on nine datasets (synthetic, tabular and vision), generating millions of counterfactuals:

- Plausibility of CEs increases by up to 90%.

- Actionability: cost of reaching valid counterfactuals with protected features decreases by 19% on average.

- Models’ adversarial robustness improves consistently.